One month before testnet, the most dangerous thing I could do is add features.

Public networks do not fail because the idea is wrong. They fail because the implementation trusted the world to behave. The world does not. The world is messy, adversarial, and creative in ways you do not control. It sends malformed inputs, strange encodings, oversized payloads, borderline timestamps, and “impossible” combinations of parameters. If your node crashes, hangs, or behaves inconsistently under that pressure, the network does not forgive you. It simply becomes untrustworthy.

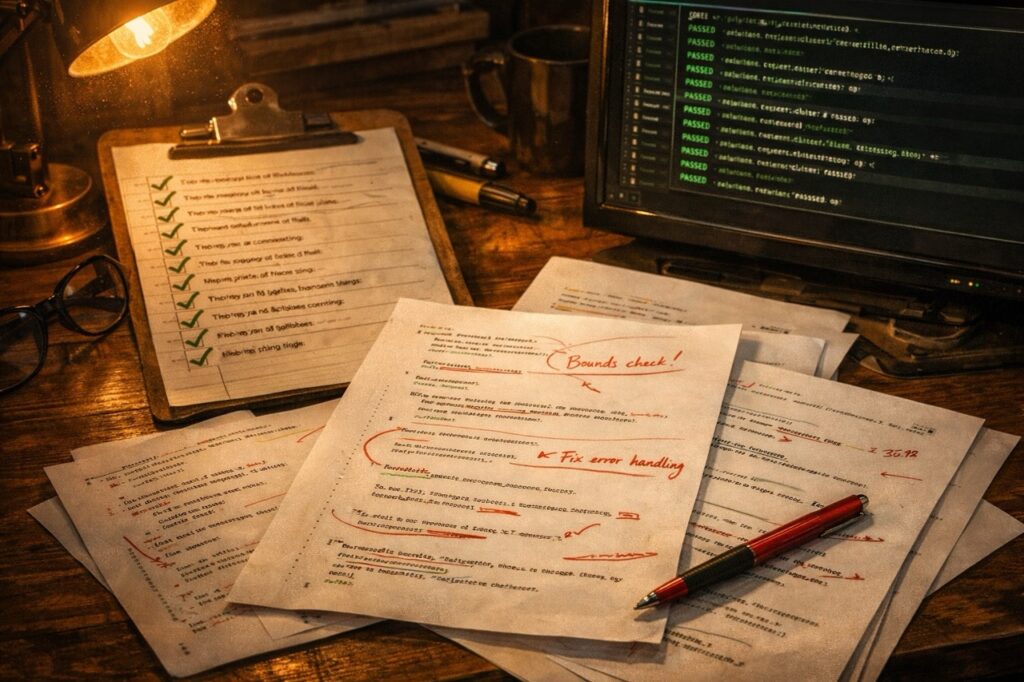

So this phase is unglamorous by design: code hardening.

The audit list is real, not theoretical. It is a set of patterns that are acceptable in a private lab and unacceptable in a public system. Unsafe array access where .Value()[0] assumes “non-empty” without verifying. Empty catch blocks that swallow exceptions, leaving you with a process that continues running but no longer tells the truth. Unsafe string-to-integer conversions via stoi/stoul, which throw on non-numeric or out-of-range input and terminate the process if nothing catches the exception. API parameter reads that assume keys exist and explode on missing data. Path parsing that uses .at(), which throws on an out-of-range index and turns a client mistake into a server crash. These are not “edge cases.” These are the attack surface of the real world.

The fixes are boring, which is perfect. Bounds checks everywhere. Explicit “is empty?” checks before accessing. Converting silent catches into structured error reporting with clear context and consistent error codes. Replacing exception-prone parsing with safe helpers that return Result types, so errors become values, not explosions. Rewriting handlers so malformed parameters return a precise 400 response with a deterministic message instead of a stack trace. Adding caps where size can be abused. Ensuring logs never leak sensitive details while still being useful when things go wrong.
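To make the shape of these fixes concrete, here is a minimal sketch, assuming C++17. Every name in it (Result, FirstOf, ParseU32, HandleGetBlock, the "height" parameter, the kMaxHeight cap) is hypothetical and chosen for illustration; it is not the node's actual code. It shows three of the patterns together: an explicit emptiness check before reading the first element, a parser that returns an error value instead of throwing, and a handler that turns a bad parameter into a deterministic 400.

```cpp
#include <charconv>
#include <cstdint>
#include <map>
#include <string>
#include <string_view>
#include <system_error>
#include <utility>
#include <vector>

// Hypothetical Result type: a value or an error message, never an exception.
template <typename T>
struct Result {
    bool ok;
    T value;
    std::string error;

    static Result Ok(T v) { return {true, std::move(v), {}}; }
    static Result Err(std::string msg) { return {false, T{}, std::move(msg)}; }
};

// Explicit "is empty?" check instead of assuming items[0] exists.
template <typename T>
Result<T> FirstOf(const std::vector<T>& items) {
    if (items.empty()) return Result<T>::Err("empty collection");
    return Result<T>::Ok(items.front());
}

// Non-throwing replacement for stoul-style parsing, built on std::from_chars.
// Rejects empty input, signs, whitespace, trailing characters and overflow.
Result<std::uint32_t> ParseU32(std::string_view text) {
    using R = Result<std::uint32_t>;
    if (text.empty()) return R::Err("empty value");

    std::uint32_t out = 0;
    const char* first = text.data();
    const char* last = first + text.size();
    auto [ptr, ec] = std::from_chars(first, last, out);

    if (ec == std::errc::invalid_argument) return R::Err("not a number");
    if (ec == std::errc::result_out_of_range) return R::Err("out of range");
    if (ptr != last) return R::Err("trailing characters");
    return R::Ok(out);
}

// Hypothetical response type and size cap, for illustration only.
struct Response {
    int status;
    std::string body;
};

constexpr std::uint32_t kMaxHeight = 10'000'000;

// Handler sketch: every failure path is a 400 with a fixed, predictable message.
Response HandleGetBlock(const std::map<std::string, std::string>& params) {
    auto it = params.find("height");  // find() instead of at(): no throw on a missing key
    if (it == params.end()) return {400, "missing parameter: height"};

    auto height = ParseU32(it->second);
    if (!height.ok) return {400, "invalid parameter height: " + height.error};
    if (height.value > kMaxHeight) return {400, "invalid parameter height: above cap"};

    return {200, "block " + std::to_string(height.value)};
}
```

The point of the design is that the caller cannot forget the failure case: a missing key, a non-numeric string, and an oversized value all travel through the same return path as success, so nothing depends on a catch block existing somewhere up the stack.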

In parallel, I expanded test coverage in places that matter for public exposure. Tests that validate archival data retention and correctness across reorg scenarios. Database concurrency checks under load, not only “single thread works.” Merkle proof validation tests, because proof verification is a trust boundary and must be correct byte-for-byte. Installer validation on a fresh VPS, because “it works on my machine” is not an acceptable deployment strategy.

None of this produces screenshots. None of this produces marketing material. But this is the difference between a testnet that people can safely touch and a testnet that teaches everyone the wrong lesson. A network that crashes on bad input is worse than no network at all, because it destroys the one asset that takes the longest to build: trust.

A testnet is not a demo. It is a contract with reality. This is me earning the right to sign it.